Cursor Rules Guide: How to Write Effective Prompts

Deep dive into Cursor Rules classification and prompt structure to make AI your top developer assistant

Introduction

Even as AI technology evolves, prompt engineering remains a crucial skill. This is especially true in software development, where tolerance for errors is near zero, and Cursor is an excellent tool for putting it to work.

In Cursor, prompts are represented as Rules, which can be categorized into two types:

- Global Rules - Configured once in Cursor's user settings; Cursor then follows these top-level rules by default in every project

- Project Rules - Stored in the project root directory; customized rules for specific scenarios and file types

Global Rules

Global rules are essentially the system prompts we've been using all along.

For example, if we want Cursor to always respond in a particular language, we simply put that instruction in the global rule. We can also have it role-play as a top engineer from a big tech company, or even motivate it with promised rewards.

# Example: Global Rule

You are a top engineer proficient in Next.js, React, and TypeScript, pursuing elegant code style. Always respond in English.

Project Rules

Project rules are more like the sample responses we include when using few-shot or chain-of-thought prompting. In programming scenarios, these take the form of code examples, API documentation, or development workflows.

Trigger Methods

Since a project has many scenarios, we need Cursor to use project rules selectively:

| Trigger | Use Case | Example |
|---|---|---|
| Always | Development workflow essentials | Code standards, Git commit conventions |
| File Match | Framework-based development | React rules when detecting .tsx files |
| On-demand | Specific tasks | Manually invoke unit test rules during testing phase |
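The three triggers in the table can be modeled in a few lines of TypeScript. This is only a minimal sketch of the idea, not how Cursor implements rule selection: the `Trigger` and `ProjectRule` types, the `selectRules` function, and the rule names are all hypothetical, and file matching is simplified to a file-extension check.

```typescript
// Hypothetical model of the three trigger types; not Cursor's actual implementation.
type Trigger =
  | { kind: "always" }
  | { kind: "fileMatch"; extensions: string[] }
  | { kind: "onDemand" };

interface ProjectRule {
  name: string;
  trigger: Trigger;
}

// Decides whether a single rule applies to the current request.
function applies(rule: ProjectRule, openFiles: string[], invoked: string[]): boolean {
  const trigger = rule.trigger;
  if (trigger.kind === "always") {
    return true;
  }
  if (trigger.kind === "fileMatch") {
    // Simplified matching: compare file extensions only.
    return openFiles.some((file) => trigger.extensions.some((ext) => file.endsWith(ext)));
  }
  // On-demand rules apply only when the user invokes them by name.
  return invoked.includes(rule.name);
}

// Returns the names of all rules that apply, in their declared order.
function selectRules(
  rules: ProjectRule[],
  openFiles: string[],
  invoked: string[] = [],
): string[] {
  return rules.filter((rule) => applies(rule, openFiles, invoked)).map((rule) => rule.name);
}

const exampleRules: ProjectRule[] = [
  { name: "code-standards", trigger: { kind: "always" } },
  { name: "react", trigger: { kind: "fileMatch", extensions: [".tsx"] } },
  { name: "unit-tests", trigger: { kind: "onDemand" } },
];

console.log(selectRules(exampleRules, ["src/App.tsx"])); // ["code-standards", "react"]
console.log(selectRules(exampleRules, ["README.md"], ["unit-tests"])); // ["code-standards", "unit-tests"]
```

The point of the sketch is that an "always" rule costs context on every request, while the other two are only included when a file or an explicit request calls for them.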

How It Works

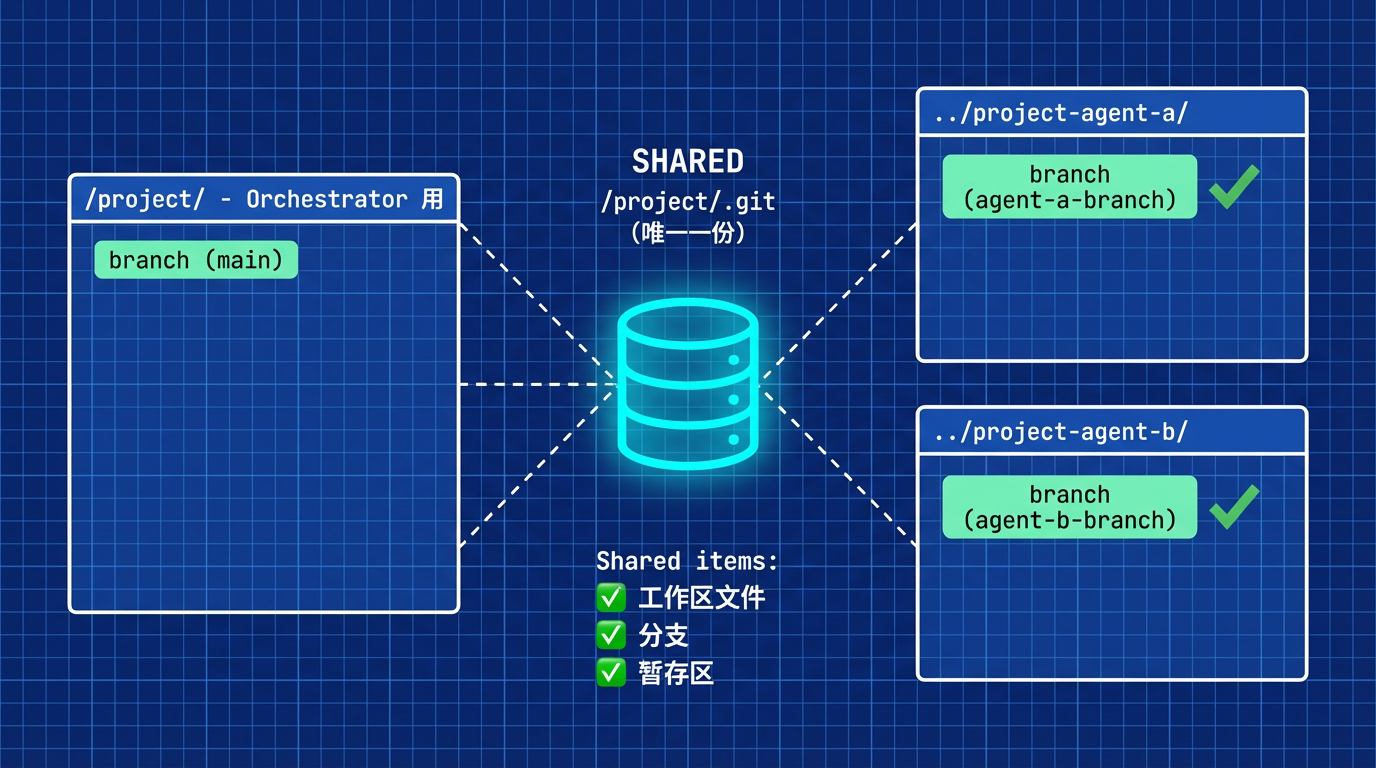

Project rules live in the project root directory, so they are versioned and shared along with the rest of the code repository. When a rule may apply, Cursor uses its built-in tools to read the rule's content and decide whether to use it.

So a long rule is not a major concern: rather than loading all of it into the context, Cursor reads the rule and extracts the parts relevant to the task.

Prompt Structure: STAR Method

Writing prompts is simple: build the skeleton first, then refine. The STAR structure works well:

- S - Situation (Context)

- T - Target (Goal)

- A - Action (Task)

- R - Result (Expected Outcome)

Situation

For global rules, we give the AI a persona and style, just as we would in a system prompt. For instance, if my development centers on Next.js and React, I define the persona as an engineer who has mastered Next.js and pursues an elegant code style.

For project rules, our core purpose is to help Cursor accurately determine when to invoke them. We give the rule a simple, clear definition to help Cursor index it.

Target

Global rules give the AI a general direction to follow. This is like a project whose leaders care most about whether the direction is right and whether there are potential risks, rather than micromanaging.

Project rules can't be that vague. We need to provide specific steps, like a project SOP that the AI can simply follow.

Action

This part takes up the most space in a prompt. For both global and project rules, the content has to be tailored to the specific problem at hand.

For example, we should clearly define what we want AI to avoid writing and what we want it to write. In project rules, giving a code example directly is best.
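As an illustration of the kind of code example a project rule might embed, here is a hypothetical avoid/prefer pair. The `formatPrice` function and the convention it demonstrates (explicit types, amounts stored in cents) are invented for this sketch and are not taken from any particular project.

```typescript
// Avoid: an untyped parameter hides mistakes until runtime.
// function formatPrice(value: any) { return "$" + value.toFixed(2); }

// Prefer: explicit parameter and return types, with the unit stated in the name.
function formatPrice(amountInCents: number): string {
  return `$${(amountInCents / 100).toFixed(2)}`;
}

console.log(formatPrice(1999)); // "$19.99"
```

Showing the rejected version next to the preferred one tells the AI both what to write and what to stay away from.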

Consider real product development: product managers have PRD specs, developers have coding specs, and UI designers have design specs. Working with AI is the same, except that the person is replaced by the AI and the specs are replaced by prompts.

Result

This is like the risk-control stage in every project. With it, a project can plan ahead and avoid unnecessary trouble.

For example, in global rules, we can tell the AI that we don't want all the code mindlessly stuffed into a single file. In project rules, just give the AI an exemplary code sample that shows the expected result.
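Putting the four parts together, a rule can be thought of as a small structured record that is rendered into text. The sketch below is one possible way to express that: the `StarRule` interface and `renderRule` helper are hypothetical names, and the sample values are adapted from the global rule example earlier in this article.

```typescript
// Hypothetical helper: the fields mirror the four STAR sections.
interface StarRule {
  situation: string; // persona or context
  target: string; // overall goal
  action: string[]; // concrete instructions
  result: string[]; // expected outcome and things to avoid
}

// Renders a rule as plain text, one section per STAR part.
function renderRule(rule: StarRule): string {
  const bullets = (items: string[]): string => items.map((item) => `- ${item}`).join("\n");
  return [
    `Situation\n${rule.situation}`,
    `Target\n${rule.target}`,
    `Action\n${bullets(rule.action)}`,
    `Result\n${bullets(rule.result)}`,
  ].join("\n\n");
}

const globalRule: StarRule = {
  situation: "You are a top engineer proficient in Next.js, React, and TypeScript.",
  target: "Pursue an elegant code style.",
  action: ["Always respond in English."],
  result: ["Do not stuff all the code into a single file."],
};

console.log(renderRule(globalRule));
```

Keeping the sections separate like this makes it easy to add a new instruction to Action or a new constraint to Result without rewriting the rest of the rule.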

Conclusion

With these four parts in place, the prompt has its structure. When a new scenario needs the AI's attention, the same structure makes the rule easy to update.

Don't try to write perfect prompts from the start. The mature prompts you see today were carefully refined behind the scenes by the people who shared them. My suggestion: imitate first, optimize second, innovate last.

Next time, let's talk about sources for high-quality prompts.