2026/03/30

What Is Prompt Caching TTL?

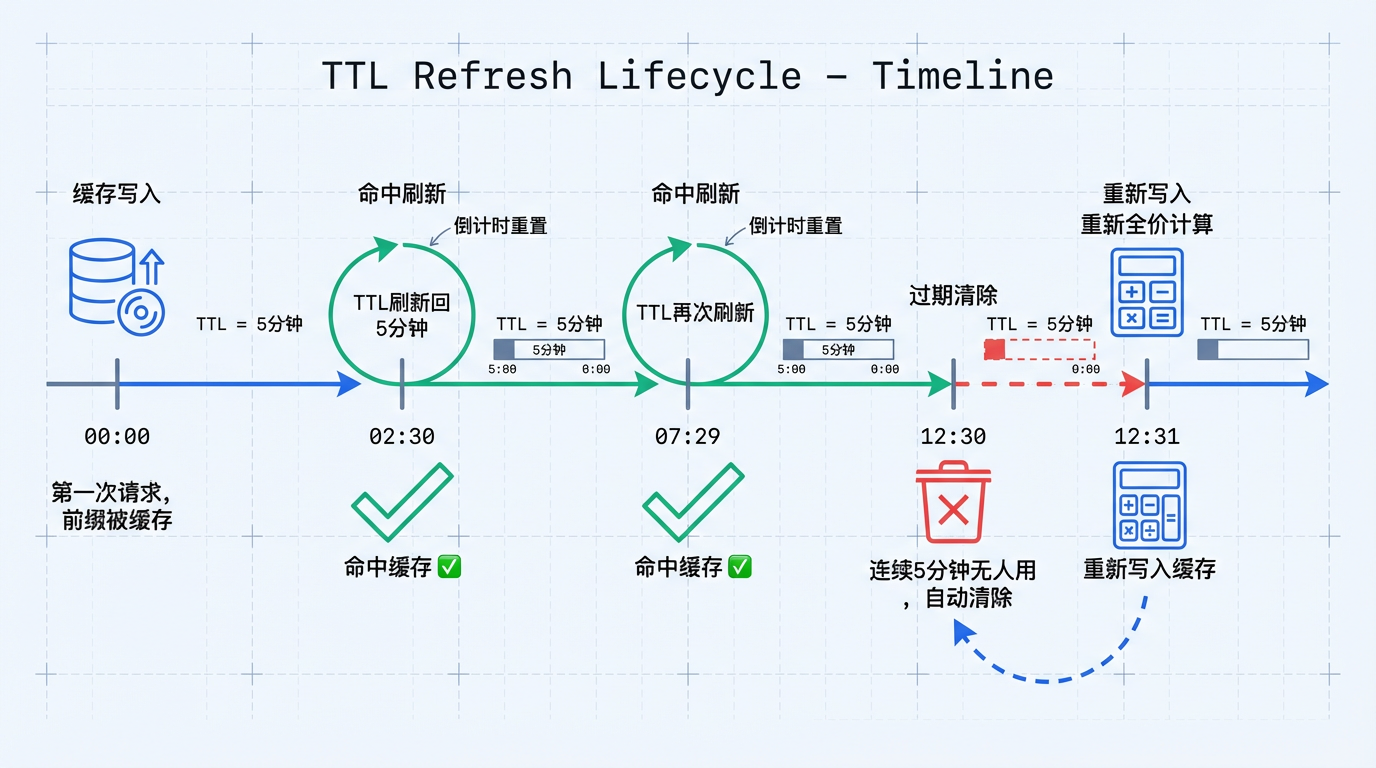

TTL is the lifetime of a prompt cache entry. Each hit refreshes it. Leave it unused for long enough, and it expires.

TTL stands for Time To Live: the amount of time a cache entry can survive without being used.

For prompt caching, TTL tells you how long a cached prefix can stay alive before the system discards it.

Start With a Concrete Example

A carton of milk has the same timing problem:

You buy milk with a shelf life of 5 days

Day 1 still fresh

Day 2 still usable

Day 5 last valid day

Day 6 expired and thrown away

Prompt cache TTL works in a similar way, except that every cache hit resets the clock:

00:00 First request writes a prefix into cache

02:30 Another request hits the cache, TTL resets to 5 minutes

07:29 Another hit refreshes the timer again

12:29 No request has hit the cache for 5 minutes, so the entry expires

12:31 A new request arrives, misses cache, and must rewrite it at full cost

Typical TTL Differences Across Providers

| Provider | TTL | Configurable | Refreshed on hit |
|---|---|---|---|
| Anthropic Claude | 5 minutes by default; 1 hour optional | Limited (5 minutes or 1 hour) | Yes |
| OpenAI | Minutes to hours | No, opaque | Opaque |
| Google Gemini | Developer-defined, 1 hour by default | Yes | Yes |

If requests keep arriving, the cache stays alive. If usage pauses for too long, the entry expires and the next request has to write it again at full cost.
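The refresh-on-hit behavior in the timeline can be sketched as a small simulation. This is a toy model, not any provider's implementation: the class name `SlidingTTLCache`, the helper `mmss`, and the prefix key `"system-prompt-v1"` are all hypothetical, and it assumes a 5-minute TTL with an entry counting as expired once the full TTL has elapsed since the last request.

```python
from dataclasses import dataclass, field


@dataclass
class SlidingTTLCache:
    """Toy model of a prompt cache whose TTL is refreshed on every hit."""

    ttl_seconds: int = 300  # assumed 5-minute TTL, as in the timeline
    _expires_at: dict = field(default_factory=dict)  # prefix -> expiry time in seconds

    def request(self, prefix: str, now: int) -> str:
        """Return "hit" or "miss" for a request arriving at time `now` (seconds)."""
        expires_at = self._expires_at.get(prefix)
        # Assumption: the entry is still alive only strictly before its expiry time.
        hit = expires_at is not None and now < expires_at
        # A hit refreshes the timer and a miss rewrites the entry, so in both
        # cases the entry lives for one full TTL starting from this request.
        self._expires_at[prefix] = now + self.ttl_seconds
        return "hit" if hit else "miss"


def mmss(text: str) -> int:
    """Convert an "MM:SS" timestamp into seconds."""
    minutes, seconds = text.split(":")
    return int(minutes) * 60 + int(seconds)


cache = SlidingTTLCache(ttl_seconds=300)
timeline = ["00:00", "02:30", "07:29", "12:31"]
results = [cache.request("system-prompt-v1", mmss(t)) for t in timeline]

for timestamp, outcome in zip(timeline, results):
    print(timestamp, outcome)
```

The first request is a miss because nothing has been written yet, the next two are hits because each arrives within 5 minutes of the previous request, and the last is a miss because more than 5 minutes have passed since 07:29.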