Prompt Caching vs Tool Result Replacement

Prompt caching cuts the price of repeated prefixes. Tool-result replacement shrinks the prompt itself. Both can save money, but they push on different parts of the bill.

When people try to optimize agent cost, they often reach for two ideas at the same time:

- use prompt caching

- replace large tool outputs with short placeholders

The problem is that these two strategies are not naturally aligned.

Cache Savings Depend on an Unchanged Prefix

For prefix-based systems such as Claude or OpenAI-style prompt caching, the core rule is simple: the prefix usually needs to remain byte-for-byte consistent for cache reuse to work well.

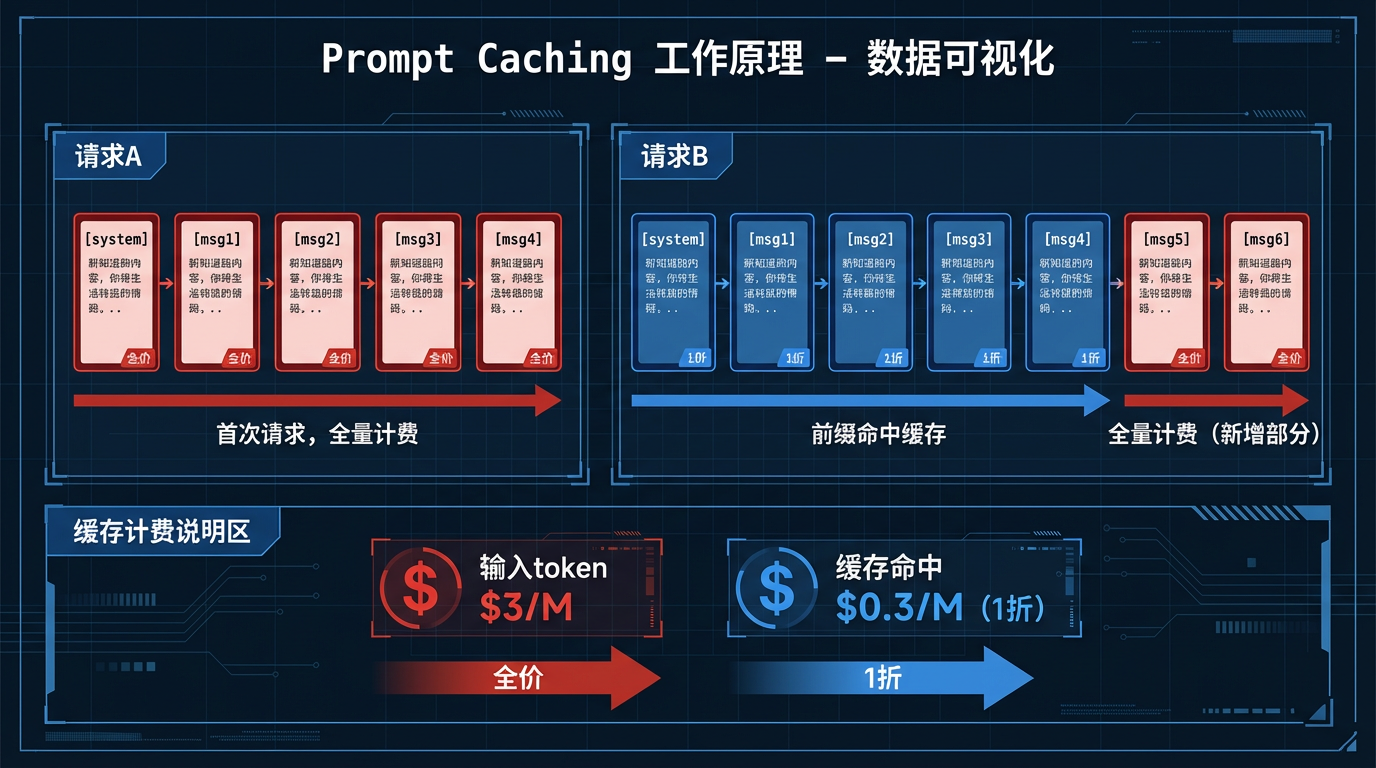

Request A:

[system][msg1][msg2][msg3][msg4]

-> first request, full price, cache write

Request B:

[system][msg1][msg2][msg3][msg4][msg5][msg6]

-> prefix hit, only the new part is typically charged at full price

Normal Chat History Already Helps Cache

A normal conversation usually appends new messages to the end of the old history, so the earlier part of the prompt is left untouched.

Turn 1: [system][user1]

Turn 4: [system][user1][assistant2][tool3][assistant4]

Turn 5: [system][user1][assistant2][tool3][assistant4][user5]

Turn 8: [system][user1][assistant2][tool3][assistant4][user5][assistant6][tool7]

Each request preserves the full prefix of the previous one, so the precondition for cache hits is often naturally satisfied.
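The append-only property can be checked mechanically. The snippet below is a minimal sketch that treats each message as an opaque label; real caches compare the serialized prompt, not labels, and `is_prefix` is a hypothetical helper written for this illustration.

```python
def is_prefix(earlier, later):
    """Return True if `later` starts with every message of `earlier`, in order."""
    return len(earlier) <= len(later) and later[:len(earlier)] == earlier


# Message labels taken from the turns listed above.
turn4 = ["system", "user1", "assistant2", "tool3", "assistant4"]
turn5 = turn4 + ["user5"]                    # append only
turn8 = turn5 + ["assistant6", "tool7"]      # append only

print(is_prefix(turn4, turn5))
print(is_prefix(turn5, turn8))
```

As long as every turn is built by appending to the previous one, each check holds and the earlier request remains a reusable prefix of the later one.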

Replacing Tool Results Changes the Old Prefix

Replacing a tool result means rewriting history.

If a large tool output is replaced with a short placeholder, the historical prefix changes:

Before replacement:

[system][user1][assistant2][tool3_full_output][assistant4][user5]

After replacement:

[system][user1][assistant2][tool3_short_placeholder][assistant4][user5]

From the replacement point onward, the byte sequence is different. That means later requests no longer share the same prefix with the older cached version.

That breaks the old cache path.
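To see how much of the old prefix survives, one can count the leading messages the two versions still share. This is a rough sketch at message granularity; `shared_prefix_len` is a hypothetical helper, and an actual provider would compare the serialized prompt rather than labels.

```python
def shared_prefix_len(a, b):
    """Count how many leading messages two histories have in common."""
    n = 0
    for x, y in zip(a, b):
        if x != y:
            break
        n += 1
    return n


before = ["system", "user1", "assistant2", "tool3_full_output", "assistant4", "user5"]
after = ["system", "user1", "assistant2", "tool3_short_placeholder", "assistant4", "user5"]

reusable = shared_prefix_len(before, after)
print(before[:reusable])   # the part that can still hit the old cache
print(after[reusable:])    # the part that has to be processed again
```

Only the messages before the replaced tool result remain reusable. Everything from the placeholder onward, including messages that did not change themselves, falls outside the old cached prefix.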

They Reduce Different Costs

They optimize different parts of the problem:

Strategy A: Keep History Intact and Rely on Prompt Caching

- upside: stable prefix and high cache hit potential

- downside: long tool outputs remain in context and token volume keeps growing

Strategy B: Compress or Replace Tool Results

- upside: smaller prompt size and lower raw token volume

- downside: broken cache prefixes and a likely full-price reset on the next round

So the two strategies often pull in opposite directions.

Which One Wins Depends on Size and Remaining Turns

The deciding variables are:

- how large the tool output is

- how many more turns the conversation is likely to have

A rough rule of thumb:

| Situation | Better strategy |
|---|---|
| Large tool output and many future turns | Prefer replacement |
| Small tool output but many future turns | Prefer keeping it and using cache |
| Conversation is nearly over | Prefer keeping it and using cache |
| Tool calls are extremely frequent and history grows fast | Prefer replacement |

If the tool output is huge and the conversation is going to continue for a while, replacement often wins. If the output is small or the chat is nearly over, leaving the history intact usually gets more out of caching.
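The trade-off can be made concrete with a toy cost model. The sketch below is an illustration under stated assumptions, not a pricing calculator: costs are in units of one full-price input token, the `read_mult` and `write_mult` defaults are illustrative placeholders rather than any provider's actual rates, and the model ignores cache expiry, output tokens, and any quality loss from dropping the tool output. All function and parameter names are hypothetical.

```python
def keep_cost(prefix, tool, suffix, new_per_turn, turns,
              read_mult=0.1, write_mult=1.25):
    """Input cost over the remaining turns when history is left intact."""
    total = 0.0
    history = prefix + tool + suffix            # assumed fully cached already
    for _ in range(turns):
        # Old history is a cache read; only the new tokens are written.
        total += history * read_mult + new_per_turn * write_mult
        history += new_per_turn
    return total


def replace_cost(prefix, placeholder, suffix, new_per_turn, turns,
                 read_mult=0.1, write_mult=1.25):
    """Input cost when the tool output is swapped for a short placeholder."""
    total = 0.0
    cached = prefix                             # only tokens before the edit still hit
    history = prefix + placeholder + suffix
    for _ in range(turns):
        uncached = history - cached + new_per_turn
        total += cached * read_mult + uncached * write_mult
        history += new_per_turn
        cached = history                        # everything sent is cached for next turn
    return total


# Hypothetical sizes in tokens: 2,000 before the tool result, 10,000 after it.
scenarios = {
    "large output, 20 turns": (50_000, 20),
    "large output, 1 turn": (50_000, 1),
    "small output, 20 turns": (500, 20),
}
for name, (tool, turns) in scenarios.items():
    keep = keep_cost(2_000, tool, 10_000, 500, turns)
    swap = replace_cost(2_000, 50, 10_000, 500, turns)
    print(name, "->", "replace" if swap < keep else "keep")
```

In this model, replacement pays a one-time penalty for re-writing everything after the edit, then saves the cached-read cost of the removed tokens on every later turn. That is why both the size of the output and the number of remaining turns matter.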